The EU Artificial Intelligence (AI) Act, given final approval by the Council of the European Union on May 21, 2024, is a comprehensive framework for AI governance comprising 180 recitals, 113 articles, and 13 annexes. The legislation reaches beyond EU borders and imposes risk-based rules on developers, exporters, and implementers across the AI ecosystem. It covers general-purpose AI (GPAI) models, high-risk AI systems, and low-risk AI systems, and it outlaws specific AI practices. Spanning over 400 pages, the Act aims to establish a global benchmark for AI regulation. It enters into force 20 days after publication in the EU Official Journal, with most provisions taking effect 24 months thereafter.

The AI Act applies to all AI systems placed on the EU market or used within the EU, irrespective of the provider’s location. General-purpose AI models, which can perform a wide range of tasks and applications, fall within its scope unless they are used for research alone. AI systems dedicated exclusively to military, defense, or national security purposes are exempt, as are systems developed solely for scientific research.

The Act sets out a staggered implementation timeline, with the majority of its rules becoming applicable 24 months after entry into force. Earlier milestones apply to the bans on prohibited practices (six months), the codes of practice (nine months), and the rules for general-purpose AI models (12 months).
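
The staggered timeline lends itself to simple date arithmetic. The sketch below is a rough illustration only: it assumes the month offsets are counted from entry into force (20 days after publication), and the helper names `add_months` and `implementation_timeline` are hypothetical. The binding dates are those written into the Act itself, which this arithmetic only approximates.

```python
import calendar
from datetime import date, timedelta

# Month offsets quoted in the text, counted here from entry into force
# (an assumption of this sketch).
MILESTONES_IN_MONTHS = {
    "bans on prohibited practices": 6,
    "codes of practice": 9,
    "general-purpose AI rules": 12,
    "majority of provisions": 24,
}


def add_months(start: date, months: int) -> date:
    """Shift `start` by `months`, clamping the day to the target month's length."""
    total = start.month - 1 + months
    year = start.year + total // 12
    month = total % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def implementation_timeline(publication: date) -> dict[str, date]:
    """Approximate milestone dates derived from a publication date."""
    entry_into_force = publication + timedelta(days=20)
    timeline = {"entry into force": entry_into_force}
    for label, months in MILESTONES_IN_MONTHS.items():
        timeline[label] = add_months(entry_into_force, months)
    return timeline


if __name__ == "__main__":
    # Illustrative input only; substitute the actual Official Journal date.
    for label, when in implementation_timeline(date(2024, 7, 12)).items():
        print(f"{label}: {when.isoformat()}")
```

Keeping the offsets in one dictionary makes it easy to adjust a milestone or add another without touching the date logic.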

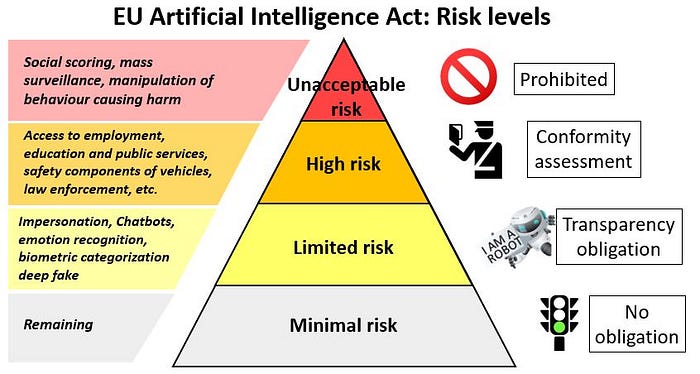

The Act adopts a risk-based approach, categorizing AI systems into four tiers: Unacceptable Risk, High-Risk, Limited Risk, and Minimal Risk.

Unacceptable-risk AI systems are prohibited. They include systems that purposefully manipulate people or use deceptive techniques; exploit vulnerabilities related to age, disability, or socio-economic status; generate social scores for groups or individuals; predict potential criminal behavior, as in predictive policing; create facial recognition databases through indiscriminate data scraping; infer emotions in workplace or educational settings; categorize people biometrically by race, political views, sexual orientation, and similar attributes; or perform real-time biometric identification in public spaces for law enforcement purposes.

Stringent obligations are imposed on high-risk systems, such as those used for biometric identification, critical infrastructure management, employment processes, and influencing elections. The measures required for high-risk systems include training data governance, human oversight, risk management, impact assessment, safety requirements, mandatory registration, and pre-market conformity assessments.

Limited-risk systems, including those involved in emotion recognition and biometric categorization, are subject to transparency obligations, while providers of minimal-risk systems, such as AI-enabled video games and spam filters, are encouraged to adhere to voluntary codes of conduct.
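
The four tiers described above can be pictured as a small lookup table. The following is a minimal sketch, not a legal classifier: the use-case labels and the `treatment_for` helper are hypothetical, and real classification depends on the context of use (emotion recognition, for instance, appears both as a prohibited practice in the workplace and as a limited-risk application elsewhere).

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Consequence attached to each tier, paraphrased from the text.
TIER_TREATMENT = {
    RiskTier.UNACCEPTABLE: "prohibited",
    RiskTier.HIGH: "stringent obligations and pre-market conformity assessment",
    RiskTier.LIMITED: "transparency obligations",
    RiskTier.MINIMAL: "voluntary codes of conduct (encouraged)",
}

# Hypothetical example labels; a real assessment is case by case.
EXAMPLE_USE_CASES = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "emotion inference in the workplace": RiskTier.UNACCEPTABLE,
    "critical infrastructure management": RiskTier.HIGH,
    "employment screening": RiskTier.HIGH,
    "emotion recognition": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
    "video game ai": RiskTier.MINIMAL,
}


def treatment_for(use_case: str) -> str:
    """Look up the tier of a known example and describe its treatment."""
    tier = EXAMPLE_USE_CASES.get(use_case.strip().lower())
    if tier is None:
        return "unclassified: needs case-by-case legal assessment"
    return f"{tier.value} risk: {TIER_TREATMENT[tier]}"


if __name__ == "__main__":
    for case in ("Social scoring", "Spam filter", "Chat assistant"):
        print(f"{case} -> {treatment_for(case)}")
```

Unknown use cases deliberately fall through to an "unclassified" result rather than a default tier, since a wrong default would understate or overstate the obligations.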

In terms of models, the Act treats general-purpose AI models with high-impact capabilities, presumed when the cumulative compute used for training exceeds 10²⁵ floating-point operations, as posing systemic risk. Such models require adversarial testing, systemic risk assessments, cybersecurity measures, and incident reporting, and are subject to confidentiality obligations. Open-source AI models, excluding monetized variants, are exempt if they do not pose systemic risk; otherwise, they are regulated like proprietary systems.
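
To give a feel for the 10²⁵ threshold, the sketch below estimates training compute with the common rule of thumb of roughly 6 floating-point operations per parameter per training token. That heuristic is an assumption borrowed from the scaling-law literature, not something the Act prescribes, and the function names and model sizes are hypothetical. The open-source check simply mirrors the conditions stated in the paragraph above.

```python
# Threshold named in the text: more than 10^25 floating-point operations.
SYSTEMIC_RISK_FLOP_THRESHOLD = 1e25


def estimate_training_flops(parameters: float, training_tokens: float) -> float:
    """Rough estimate via the 6 * N * D heuristic (assumption, not from the Act)."""
    return 6.0 * parameters * training_tokens


def presumed_systemic_risk(training_flops: float) -> bool:
    """True when training compute exceeds the threshold."""
    return training_flops > SYSTEMIC_RISK_FLOP_THRESHOLD


def open_source_exempt(open_source: bool, monetized: bool, training_flops: float) -> bool:
    """Exempt only if open source, not monetized, and below the threshold."""
    return open_source and not monetized and not presumed_systemic_risk(training_flops)


if __name__ == "__main__":
    # Hypothetical models: (parameters, training tokens).
    models = {
        "mid-size model": (70e9, 15e12),   # about 6.3e24 FLOPs
        "frontier model": (400e9, 15e12),  # about 3.6e25 FLOPs
    }
    for name, (params, tokens) in models.items():
        flops = estimate_training_flops(params, tokens)
        print(f"{name}: {flops:.2e} FLOPs, systemic risk presumed: "
              f"{presumed_systemic_risk(flops)}")
```

Under these assumptions, a 70-billion-parameter model trained on 15 trillion tokens lands at about 6.3 × 10²⁴ operations, just under the threshold, while a 400-billion-parameter model on the same data exceeds it.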

In terms of technical documentation, implementation, and compliance, providers and users must maintain thorough technical documentation and ensure compliance with the AI Act and EU copyright law. Open-source models released under free licenses are exempt, provided they disclose the required details. Compliance also entails cooperating with authorities, adhering to approved standards, and taking a human-centric approach that emphasizes fundamental rights and robust risk management.